Roulette Technologies is a product-focused software company building and operating customer-facing systems in the cloud. As its platform moved from experimentation into steady production usage, one architectural question surfaced quickly:

How should the network be structured so that failures are contained, costs remain predictable and a small engineering team can still operate the system confidently?

Together we'll walk through how Roulette Technologies evaluated VPC segmentation options, the constraints that shaped the decision and why a deliberately balanced approach was chosen.

Editorial context: Roulette Technologies documents production-informed decisions based on a combination of direct experience and observed industry patterns. Specific details are representative, not exhaustive.

The Situation Roulette Technologies Found Itself In

Roulette Technologies had reached product–market fit.

- Traffic was consistent.

- Customers depended on the system.

- Failures were no longer theoretical... this is LIVE!

At the same time, the organization was intentionally lean:

- A team of three to five generalist engineers

- No dedicated platform or networking team

- A tightly controlled AWS budget

- Revenue that was meaningful, but not tolerant of waste

The system itself followed a familiar shape a web application with clear application and data layers but the network design now mattered in ways it hadn't before.

Any decision made at this stage would have long-term consequences:

- It would define how far failures could spread

- It would determine how difficult incidents would be to debug

- It would either enable or block future compliance efforts, most importanly

- It would influence how costs scaled with traffic

Framing the Real Problem

Rather than starting with AWS best practices or reference diagrams, Roulette Technologies framed the problem more narrowly:

How can a production VPC be segmented to contain failures and security incidents without exceeding cost limits or operational capacity?

More human and easy to understand that way. This framing intentionally excluded several tempting directions:

- Microservices versus monolith debates

- Zero-trust networking

- Service meshes

- Multi-region architectures

- Kubernetes networking abstractions

Those concerns belonged to a future version of the company.

The goal here was survivable production infrastructure, not theoretical perfection.

The Constraints That Shaped Every Decision

A few realities dominated the discussion:

Network costs scale with traffic, not intent

The most dangerous costs were not hourly infrastructure charges, but per-GB data processing and transfer.

Operational mistakes are expensive

A design that saves money but amplifies human error is not cost-effective.

Debugging speed matters more than elegance

At 2am, clarity beats cleverness if you'll agree with me

Security is about containment, not absolutes

Eliminating all risk was unrealistic the efficency of a machine can't be 100% but limiting the blast radius was achievable.

With these constraints in mind, Roulette Technologies evaluated several approaches.

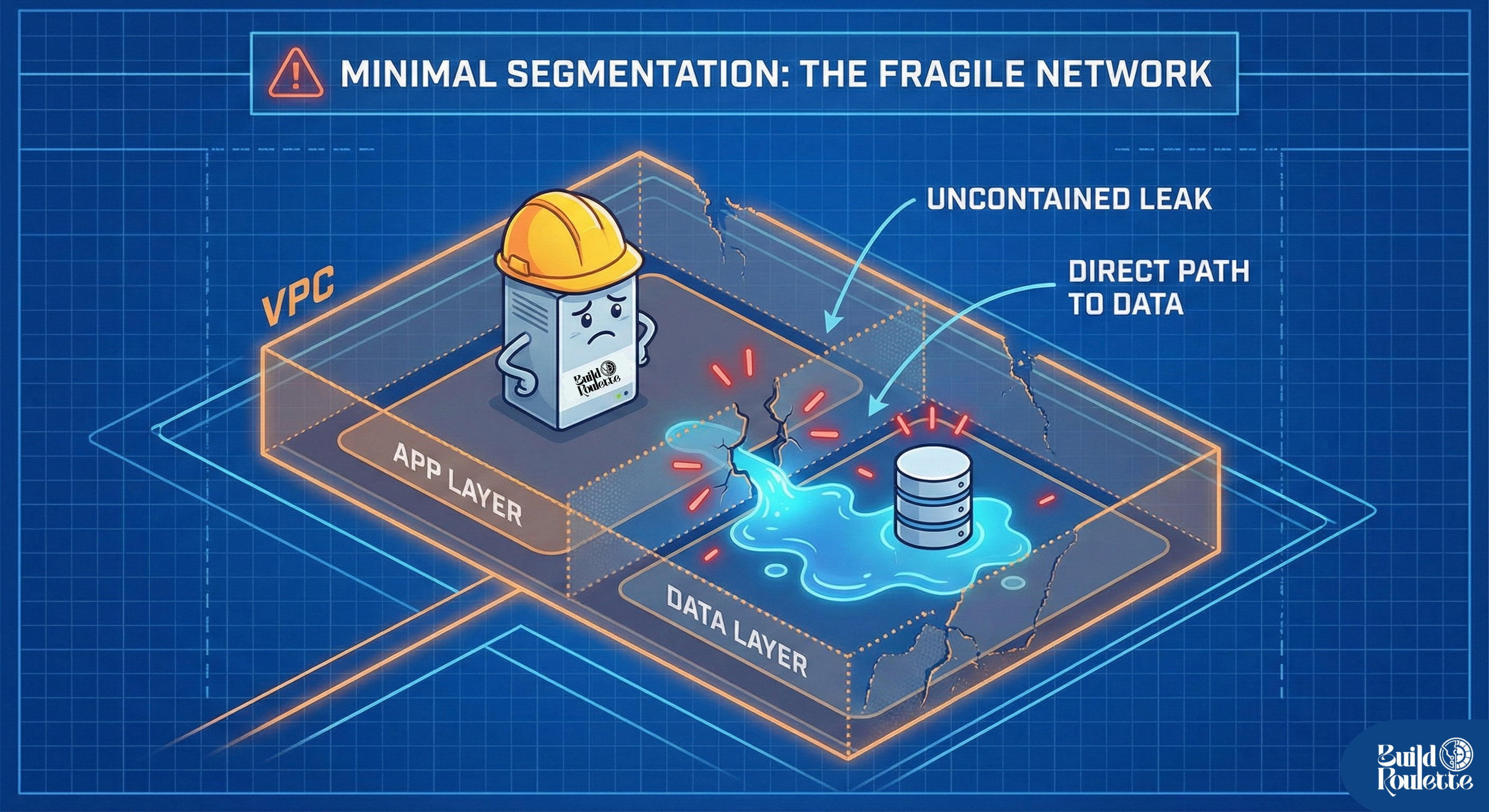

The Simplest Path: Minimal Segmentation

The first option was straightforward:

- Public subnets for ingress

- Private subnets for everything else

- Application and data living side by side

This approach was attractive for its simplicity. Routing was easy to reason about. Costs were minimal at low traffic volumes.

But when failure scenarios were examined, the weaknesses were obvious:

But when failure scenarios were examined, the weaknesses were obvious:

- A compromised application had a direct path to data

- A single misconfiguration could expose the entire system

- There was no clean way to isolate damage during an incident

For a production system handling customer data, this fragility was unacceptable.

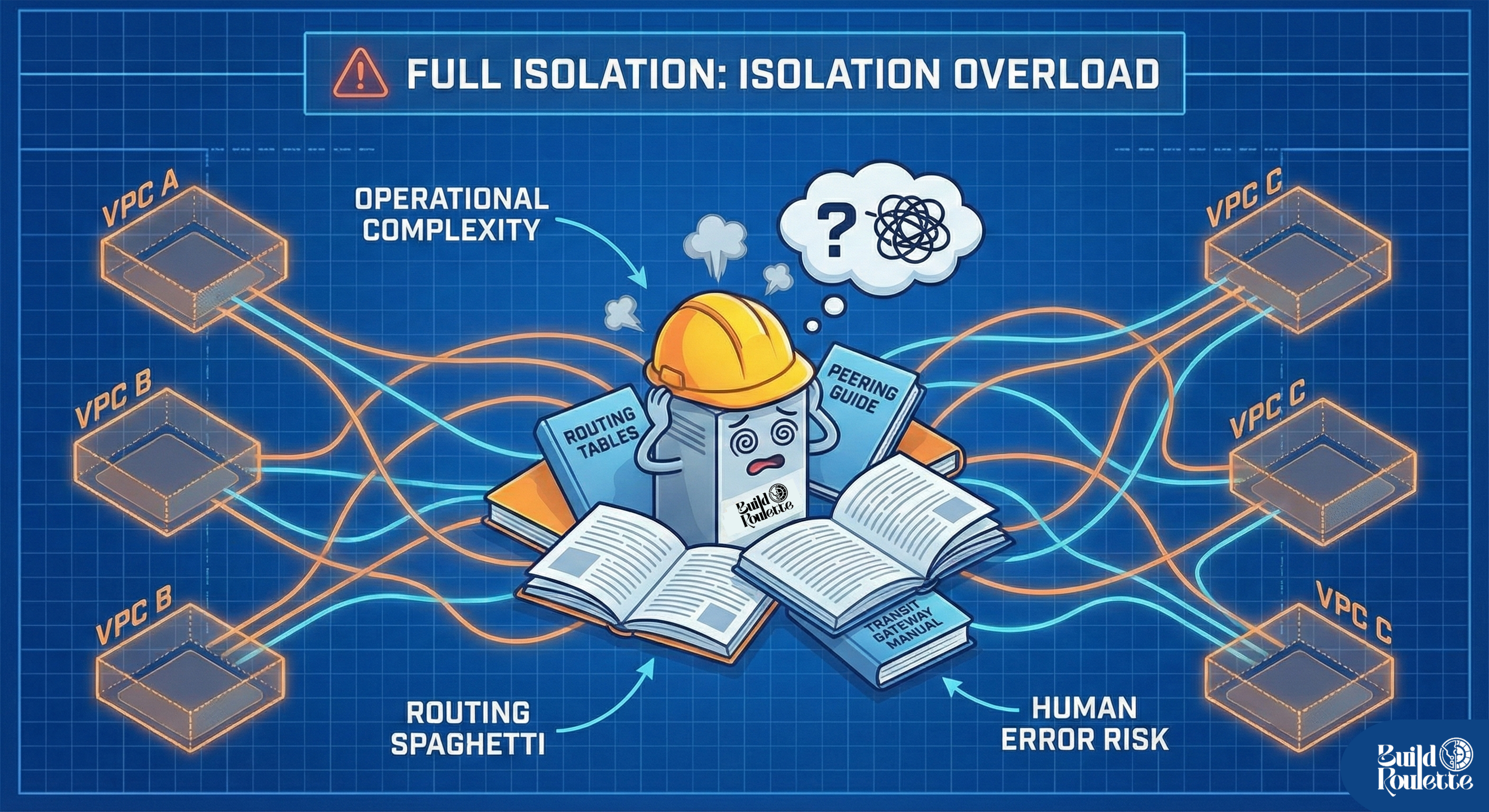

The Opposite Extreme: Full Isolation Everywhere

At the other end of the spectrum was heavy segmentation:

- Multiple VPCs

- Strict boundaries between environments

- Deep isolation at every layer

On paper, this offered excellent containment.

In practice, it introduced a different kind of risk:

In practice, it introduced a different kind of risk:

- Complex routing paths

- Higher operational overhead

- Debugging that required specialized expertise

- Costs that scaled non-linearly with complexity

For a small team, this level of isolation created more operational risk than it removed.

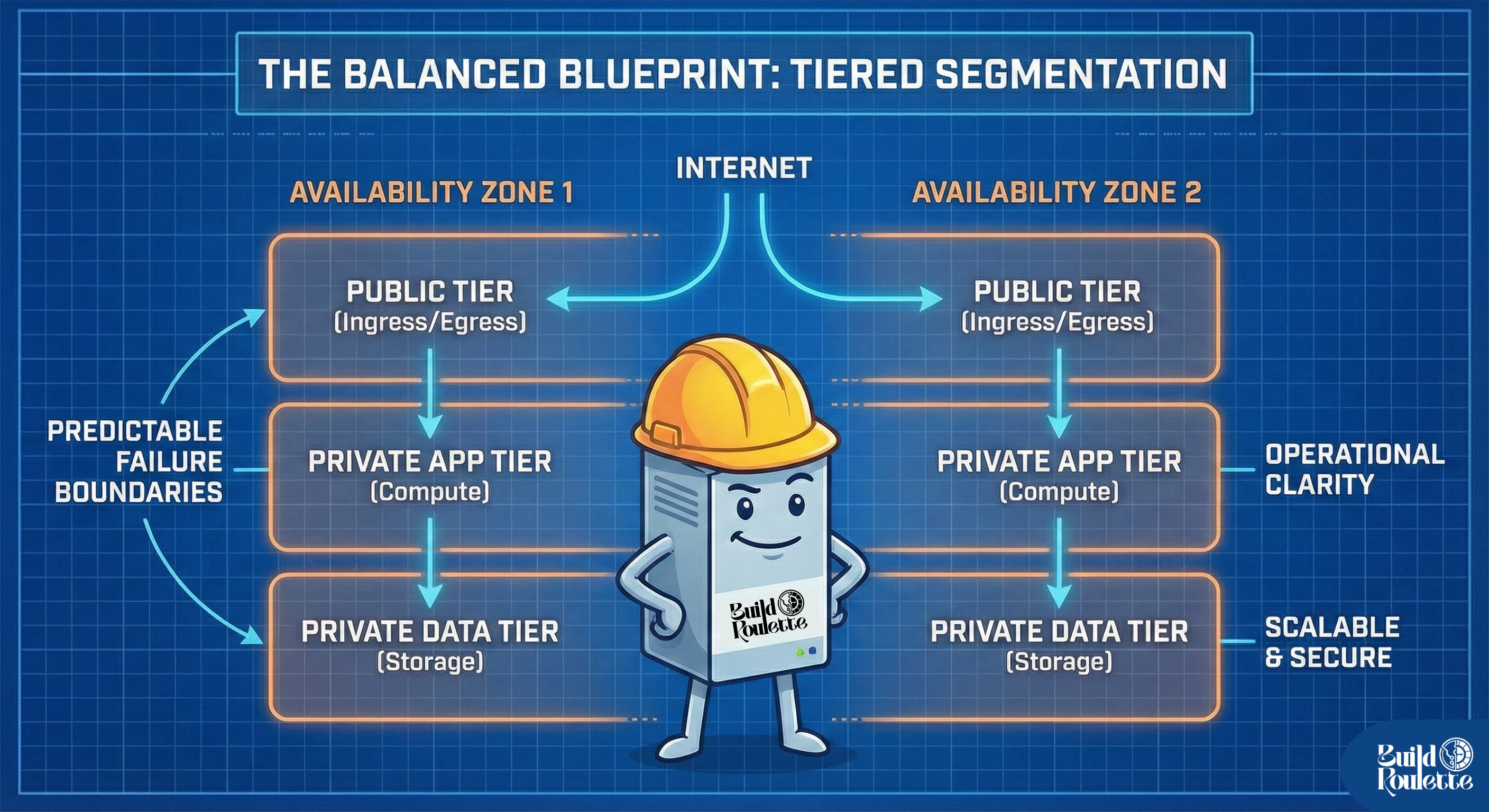

The Approach Roulette Technologies Chose

Ultimately, Roulette Technologies settled on a tiered segmentation model:

- A public tier for controlled ingress and egress

- A private application tier for compute and background work

- A private data tier with no direct internet access

- The same structure replicated across two availability zones

This approach was intentionally conservative.

This approach was intentionally conservative.

It did not aim for maximum isolation. It aimed for predictable failure boundaries, observable cost growth and operational clarity.

- Failures in one tier would not automatically cascade into others.

- Traffic paths were explicit and reviewable.

- Costs grew primarily with usage, not configuration mistakes.

Most importantly, engineers could understand the system under pressure.

Why This Balance Worked

From a security standpoint, sensitive data lived behind explicit network boundaries.

From a cost standpoint, the dominant drivers data processing and transfer were visible and controllable.

From an operational standpoint, incident response followed a clear mental model.

The architecture also left room to evolve:

- Additional segmentation could be introduced later

- Compliance-driven controls could be layered on

- Multi-VPC or multi-region designs could be justified when needed

Nothing about the design closed doors prematurely.

Accepted Risk, Documented Intentionally

Roulette Technologies did not pretend this design eliminated risk.

It explicitly accepted:

- Some lateral movement potential within the application tier

- A single VPC as a shared fate boundary

- Heavy reliance on security groups and infrastructure-as-code discipline

These risks were documented, monitored and tied to clear revisit conditions.

Undocumented risk creates surprises. Documented risk creates options.

When the Decision Would Be Revisited

The company agreed to re-evaluate the architecture if:

- Network costs grew disproportionately relative to traffic

- Compliance requirements became mandatory

- The engineering team grew large enough to support deeper specialization

- A real security incident exposed weaknesses in containment

- The system needed to expand across regions

Until then, this design represented the right trade-off for the company's stage.

What This Story Is Really About

This article is not about VPCs It is about:

- Designing within constraints instead of ideals

- Treating cost as a first-class architectural concern

- Balancing security with human operability

- Making decisions that can evolve instead of turning to blocker in the long run

That is the mindset Roulette Technologies applied — and the mindset this article is meant to illustrate.

Final takeaway

Good infrastructure is not defined by how advanced it looks, but by how well it matches the scale, risks and people responsible for operating it.