As Roulette Technologies transitioned from controlled beta to live production, the system behaved predictably. The core workflows were stable, and all customer-facing channels performed as expected. No recurring operational incidents needed attention.

The first real concern surfaced beyond the application.

A routine monthly review of AWS spend revealed a significant increase in observability costs. CloudWatch usage had grown faster than any other service and accounted for nearly one-third of total cloud expenditure. The increase was not tied to system outages, incident volume, or higher error rates. It came from default service configurations that favor extensive data collection regardless of cost.

Editorial context:

Build Roulette documents production-informed decisions based on a combination of direct experience and observed industry patterns. Specific details are representative, not exhaustive.

All log groups used the Standard ingestion class, even when the log data would rarely, if ever, be queried. Log retention was left at “Never Expire”, allowing storage costs to accumulate quietly over time. At the same time, detailed monitoring at 1-minute resolution was enabled for most of the fleet, turning otherwise free basic monitoring into a continuing expense.

The system was observable in the broadest sense, but much of the telemetry it gathered had little operational relevance. Successful requests, background noise, and routine health checks were given the same priority, cost, and storage as failed requests. Eventually, monitoring spend began to approach the cost of the compute resources being monitored.

THE OBJECTIVE: ACHIEVING SUSTAINABLE OBSERVABILITY

The team at Roulette Technologies revisited its observability design with a precise goal: maintain fast detection and effective incident response while removing telemetry that carries no operational value.

The goal was less about reducing the volume of data already collected and more about controlling data before it entered the system. A filter-first architecture allows observability costs to scale with system health instead of traffic volume.

Three basic constraints guided the redesign:

1. Operational simplicity

The engineering team consists of five generalists and does not operate a dedicated SRE function. Self-hosted observability stacks such as Prometheus and Grafana had been evaluated, but their ongoing maintenance, infrastructure management, and on-call overhead exceeded what the team could reliably support. Any solution had to remain AWS-managed, low-touch, and operationally predictable.

2. Metrics-first detection

Detection is based entirely on metrics. Logs are stored for focused investigation, and traces support dependency and latency analysis. Automated alerting is deliberately decoupled from log scanning to avoid the constant ingestion and query costs that come with detailed logging.

3. Automated governance

The design had to eliminate the need for manual cleanups and reactive cost controls. The observability system needed built-in filtering, retention, and escalation rules that would remain effective as traffic grew and services evolved, without requiring engineers to take action during incidents.

THE STRATEGY: TECHNICAL IMPLEMENTATION AND TRADE-OFFS

To manage telemetry growth, Roulette Technologies made a set of targeted changes without replacing the existing observability stack. The aim was to maintain incident visibility while constraining data ingestion, storage, and analysis costs.

The strategy prefers operational signals to exhaustive history. Telemetry is kept according to its contribution to detection, investigation, or dependency analysis, on the expectation that not all data provides equal value during operations.

All of these changes use AWS-managed services, which avoids additional operational overhead.

Paths Intentionally Avoided

Self-hosted observability platforms such as Prometheus and Grafana were assessed but ruled out. They provide robust cost control but bring infrastructure management complexity that is currently beyond the team's capacity.

A self-managed observability stack would have traded cost savings for increased engineering time. That trade-off is not justified for a team of this size without a dedicated SRE function.

Meanwhile, because Roulette Technologies is an AWS-native product, cost controls within CloudWatch, such as ingestion tiers, filters, and other managed services, remain applicable.

All application instrumentation follows OpenTelemetry-compatible standards, allowing future adoption of managed observability platforms without significant refactoring of the application.

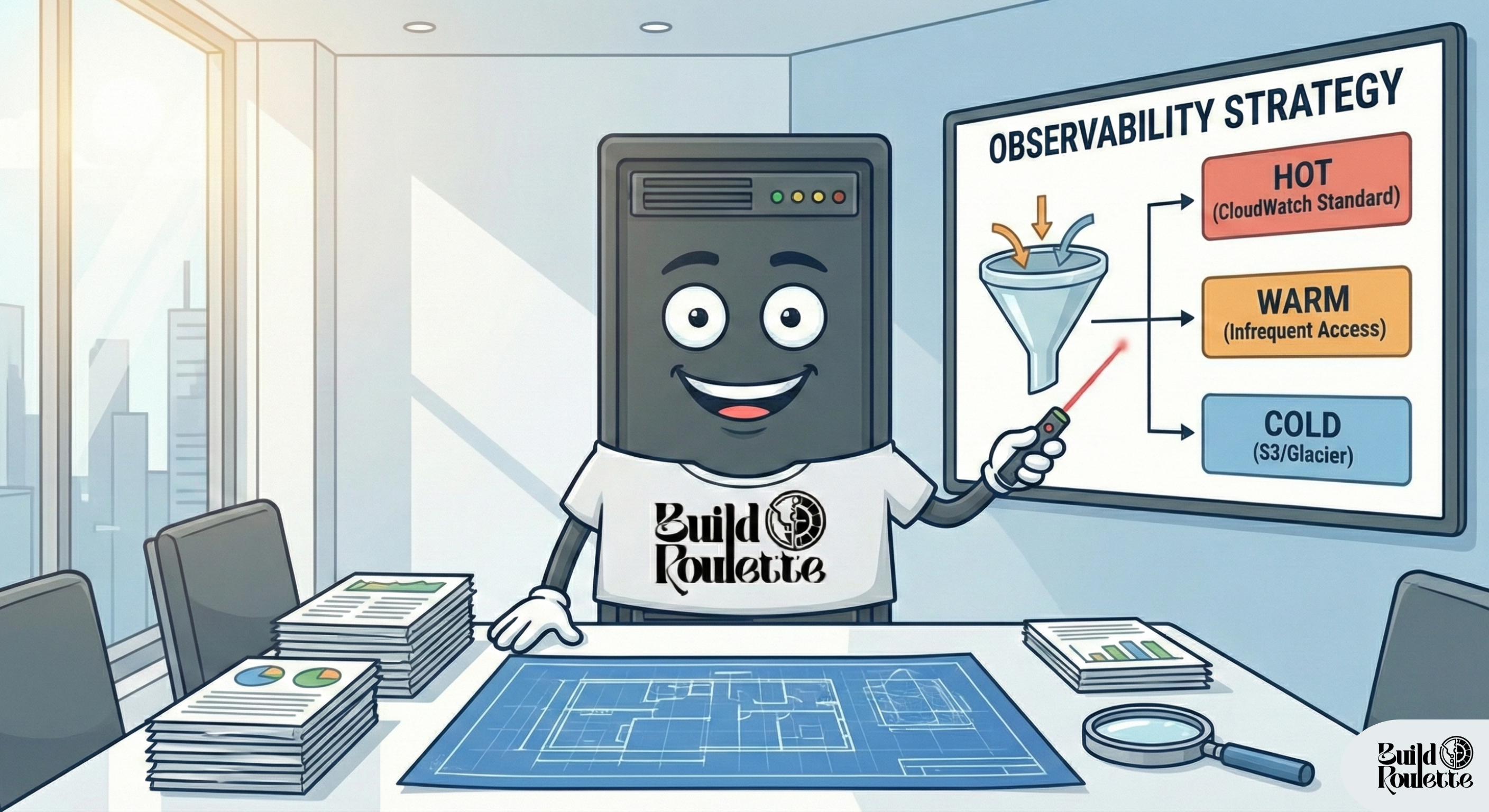

Method 1: Implementing a Tiered Logging Model

Roulette Technologies set up a tiered logging model that aligns log storage and query costs with operational usage patterns. Logs are categorized by how frequently they are accessed and by their role in incident response.

- Standard Class: Production logs that power real-time metric filters or need near-immediate visibility (for example, sub-second Live Tail usage) remain in the Standard class.

- Infrequent Access (IA) Tier: General-purpose application logs and routine system events are routed to this tier, where storage and ingestion cost much less.

- Trade-off: The IA tier comes with higher query and retrieval costs. This was acceptable because operational troubleshooting focuses on recent failures and targeted queries rather than broad historical log analysis.
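
The tiering rule above can be sketched as a small classification function. This is a minimal illustration, not the team's actual tooling: the `LogSource` fields, the `/roulette/...` naming scheme, and the choice to apply a seven-day retention to every group are assumptions made for the example.

```python
from dataclasses import dataclass


# Hypothetical description of a log source; field names are illustrative.
@dataclass(frozen=True)
class LogSource:
    name: str
    feeds_metric_filters: bool  # alerts are derived from these logs
    needs_live_tail: bool       # engineers tail these logs during incidents


def log_group_settings(source: LogSource, retention_days: int = 7) -> dict:
    """Choose a log class and retention period for one log source."""
    needs_standard = source.feeds_metric_filters or source.needs_live_tail
    return {
        "logGroupName": f"/roulette/{source.name}",  # hypothetical naming scheme
        "logGroupClass": "STANDARD" if needs_standard else "INFREQUENT_ACCESS",
        "retentionInDays": retention_days,           # assumed default for all groups
    }


sources = [
    LogSource("payments-api", feeds_metric_filters=True, needs_live_tail=True),
    LogSource("batch-reports", feeds_metric_filters=False, needs_live_tail=False),
]
settings = [log_group_settings(s) for s in sources]
for entry in settings:
    print(entry)
```

The resulting keys mirror the arguments that the boto3 CloudWatch Logs client accepts in `create_log_group` (`logGroupName`, `logGroupClass`) and `put_retention_policy` (`logGroupName`, `retentionInDays`), so the output could be passed to those calls in a provisioning script.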

Method 2: Edge-Filtering via the CloudWatch Agent

CloudWatch costs are dominated by per-GB ingestion and analysis charges. To reduce them, filtering was applied before telemetry data reached the AWS-managed endpoints.

The CloudWatch agent configuration on the EC2 instances included log filters (filters entries of type exclude) with regular expressions that drop INFO- and DEBUG-level messages, such as log lines for HTTP 2xx or 3xx responses.

Dropping high-volume, low-value telemetry at the instance level significantly reduced ingestion costs while preserving the error and failure signals needed for incident response.
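
As a rough illustration of agent-side filtering, the sketch below builds a CloudWatch agent `logs` section with an exclude filter and approximates its effect locally. The file path, log group name, log line layout, and the regular expression itself are hypothetical, and Python's `re` module is used only as a stand-in for the agent's own regex engine, which may differ in edge cases.

```python
import json
import re

# Assumed pattern: matches lines at INFO or DEBUG level, or with a 2xx/3xx status.
# The "LEVEL ... status=NNN" line layout is hypothetical.
NOISE_PATTERN = r"\b(INFO|DEBUG)\b|status=[23]\d\d"

agent_config = {
    "logs": {
        "logs_collected": {
            "files": {
                "collect_list": [
                    {
                        "file_path": "/var/log/app/app.log",         # hypothetical path
                        "log_group_name": "/roulette/payments-api",  # hypothetical name
                        "log_stream_name": "{instance_id}",
                        # Lines matching an "exclude" expression are not shipped.
                        "filters": [
                            {"type": "exclude", "expression": NOISE_PATTERN}
                        ],
                    }
                ]
            }
        }
    }
}


def would_ship(line: str) -> bool:
    """Approximate the exclude filter locally with Python's re module."""
    return re.search(NOISE_PATTERN, line) is None


sample = [
    "2024-05-01T10:00:00Z INFO GET /health status=200",
    "2024-05-01T10:00:01Z ERROR POST /charge status=502 upstream timeout",
    "2024-05-01T10:00:02Z DEBUG cache hit key=abc",
]
shipped = [line for line in sample if would_ship(line)]
print(json.dumps(agent_config, indent=2))
print(shipped)
```

Testing the expression against representative log lines before rollout helps confirm that error lines are never caught by the noise pattern.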

Method 3: Metrics for Detection, Logs for Investigation

All automated alerting is driven exclusively by CloudWatch metrics. Non-critical services rely on free basic monitoring at 5-minute resolution, while detailed monitoring at 1-minute resolution is reserved for the critical transactional path. CloudWatch Dashboards expose golden signals such as latency, error rate, and throughput per critical service in real time, without relying on ad-hoc log queries.

Metric filters extract structured numerical data from Standard-class logs, making application-level failure patterns visible without long-term retention of the detailed log data itself.
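
A metric filter and its alarm might be defined along the following lines. This is a sketch under assumptions: the structured log field `level`, the `Roulette/...` namespace, the metric and alarm names, and the threshold are all hypothetical. The dictionaries use the argument names of the boto3 `put_metric_filter` (CloudWatch Logs) and `put_metric_alarm` (CloudWatch) calls but are only constructed here, not sent to AWS.

```python
def error_metric_filter(log_group: str, service: str) -> dict:
    """Arguments for a metric filter that counts ERROR events in JSON logs."""
    return {
        "logGroupName": log_group,
        "filterName": f"{service}-error-count",
        # JSON filter pattern: matches events whose "level" field equals ERROR.
        "filterPattern": '{ $.level = "ERROR" }',
        "metricTransformations": [
            {
                "metricName": "ErrorCount",
                "metricNamespace": f"Roulette/{service}",  # hypothetical namespace
                "metricValue": "1",     # each matching event adds 1
                "defaultValue": 0.0,    # report 0 when nothing matches
            }
        ],
    }


def error_alarm(service: str, threshold: float = 5.0) -> dict:
    """Arguments for an alarm on the metric produced by the filter above."""
    return {
        "AlarmName": f"{service}-errors",
        "Namespace": f"Roulette/{service}",
        "MetricName": "ErrorCount",
        "Statistic": "Sum",
        "Period": 60,                   # seconds
        "EvaluationPeriods": 1,
        "Threshold": threshold,         # hypothetical threshold
        "ComparisonOperator": "GreaterThanThreshold",
        "TreatMissingData": "notBreaching",
    }


metric_filter = error_metric_filter("/roulette/payments-api", "payments-api")
alarm = error_alarm("payments-api")
print(metric_filter["filterPattern"], alarm["AlarmName"])
```

Because the alarm watches the derived metric rather than the logs, detection keeps working even when the underlying log events have aged out.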

Method 4: Adaptive Distributed Tracing

In Roulette Technologies' microservice architecture, distributed tracing is required for both dependency and latency analysis, but tracing every request is too costly.

AWS X-Ray was deployed with a baseline sampling rate of 5% for healthy requests. Targeted sampling ensures 100% of the requests that result in 4xx or 5xx responses are captured.

This strategy preserves visibility into failures and critical paths while avoiding the cost of recording routine, successful traffic.
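
The sampling policy itself (all failures, 5% of healthy traffic) can be expressed in a few lines. The sketch below illustrates only the decision rule, not how X-Ray is configured to achieve it. It assumes the decision is made once the response status is known, and the hash-based bucketing and the `keep_trace` name are choices made for the example.

```python
import hashlib

BASELINE_RATE = 0.05  # 5% of healthy requests, per the text


def keep_trace(trace_id: str, status_code: int, rate: float = BASELINE_RATE) -> bool:
    """Keep every 4xx/5xx trace; keep a stable ~rate share of the rest."""
    if status_code >= 400:
        return True
    # Hash the trace ID into [0, 1) so the same trace always gets the same decision.
    digest = hashlib.sha256(trace_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return bucket < rate


healthy_kept = sum(keep_trace(f"trace-{i}", 200) for i in range(10_000))
failed_kept = sum(keep_trace(f"trace-{i}", 503) for i in range(100))
print(healthy_kept, failed_kept)
```

Hashing the trace ID rather than drawing a random number keeps the decision consistent across services that see the same trace.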

Method 5: The "Financial Circuit Breaker"

To keep unexpected telemetry spikes from growing out of control, a financial safeguard was introduced.

An AWS Budget publishes to an SNS topic when daily spend on observability services exceeds 120 percent of the forecast. The topic invokes a Lambda function, which updates a global parameter in AWS Systems Manager Parameter Store.

Applications monitor this parameter and automatically lower their log output to the CRITICAL level when a breach occurs, limiting further cost exposure until remediation is complete.
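
One way the circuit breaker could be wired together is sketched below. Several details are assumptions: the parameter name, the JSON message keys `actual_spend` and `forecast_spend` (real AWS Budgets notifications are formatted differently and would need their own parsing), and the `FakeSsm` stand-in that lets the example run without AWS access. In a real Lambda, the client would be `boto3.client("ssm")`, whose `put_parameter` call takes the same arguments.

```python
import json
import logging

PARAM_NAME = "/observability/log-level"  # hypothetical parameter name
THRESHOLD_RATIO = 1.20                   # 120% of forecast, per the text

LEVELS = {
    "DEBUG": logging.DEBUG, "INFO": logging.INFO, "WARNING": logging.WARNING,
    "ERROR": logging.ERROR, "CRITICAL": logging.CRITICAL,
}


def handle_budget_alert(event: dict, ssm_client) -> bool:
    """Lambda-style handler: trip the breaker when spend exceeds the threshold."""
    # Assumes a JSON message body with hypothetical keys (see lead-in).
    message = json.loads(event["Records"][0]["Sns"]["Message"])
    breached = message["actual_spend"] > THRESHOLD_RATIO * message["forecast_spend"]
    if breached:
        ssm_client.put_parameter(
            Name=PARAM_NAME, Value="CRITICAL", Type="String", Overwrite=True
        )
    return breached


def apply_log_level(parameter_value: str, logger: logging.Logger) -> None:
    """Application side: map the parameter value onto the logger's level."""
    logger.setLevel(LEVELS.get(parameter_value.upper(), logging.INFO))


class FakeSsm:
    """Stand-in for an SSM client so the sketch runs without AWS access."""

    def __init__(self):
        self.calls = []

    def put_parameter(self, **kwargs):
        self.calls.append(kwargs)


event = {"Records": [{"Sns": {"Message": json.dumps(
    {"actual_spend": 130.0, "forecast_spend": 100.0})}}]}
ssm = FakeSsm()
tripped = handle_budget_alert(event, ssm)
app_logger = logging.getLogger("app")
apply_log_level("CRITICAL", app_logger)
print(tripped, app_logger.level)
```

Applications would poll or cache the parameter on an interval of their choosing. The sketch leaves that refresh loop, and the reset after remediation, out of scope.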

Incident Response Workflow

Incident detection is driven by CloudWatch metric alarms. CloudWatch Dashboards support quick service-level troubleshooting, and AWS X-Ray traces identify dependency and latency issues.

Targeted CloudWatch Logs Insights queries are run against the Standard-class logs to confirm root cause. Infrequent Access logs are queried only when additional historical context is required.

This workflow allows quick investigation while keeping unnecessary log scanning to a minimum during normal operations.

STRATEGIC OUTCOMES: ALIGNING VISIBILITY WITH VALUE

The filter-first architecture guarantees that observability costs scale in line with actionable events instead of raw traffic. Key outcomes include:

Predictable Unit Economics

High-volume logs are moved to the Infrequent Access (IA) tier with a seven-day retention policy, turning storage into a predictable unit cost.

Increased Signal Density

Agent-side filtering removes the background noise of routine heartbeats and health checks, leaving a clear stream of useful information for faster root cause analysis.

Detection over Investigation

Alerting is metrics-first, which minimizes generic log scans as a detection mechanism. Logs are used only as a means of investigation, keeping investigations streamlined while remaining sufficiently traceable.

This approach aligns cost with operational value, preserves visibility for key services, and enables rapid response with fiscal discipline.

ACKNOWLEDGED STRATEGIC TRADE-OFFS

The architecture accepts controlled operational gaps in exchange for cost discipline:

Context Gap

Filtering out INFO/DEBUG messages makes it difficult to review each successful transaction, which may hinder analysis of the conditions that preceded an error.

Variable Retrieval Costs

IA tier logs lower daily storage costs but may incur higher costs for historical searches, which is an acceptable trade-off.

Tracing Granularity

Sampling 5% of healthy requests through X-Ray provides adequate coverage, but an occasional latency increase in healthy requests might not be traced.

Instrumentation Adjustments

Engineers may need to raise log verbosity or tracing rates temporarily during an investigation and then restore them to maintain a cost-effective balance.

This approach balances operational effectiveness with predictable costs, ensuring the team can respond efficiently without taking on unexpected financial risk.

FUTURE ARCHITECTURAL TRIGGERS

This approach is appropriate at Roulette Technologies' current scale. The following conditions would justify moving to a more advanced observability stack:

1. Metric Growth

When custom metrics grow to the point that CloudWatch costs approach those of a dedicated Amazon Managed Service for Prometheus workspace.

2. Resolution Limits

When 5-minute basic monitoring cannot meet the needs of high-frequency transactional services that require response times under 1 minute.

3. Audit and Compliance Needs

When regulatory or compliance requirements demand extensive multi-month historical queries, at which point the S3/Athena/IA model becomes less cost-effective than a dedicated OpenSearch cluster.

These triggers describe clear and measurable criteria by which a decision can be made as to whether a more complex solution is appropriate.

FINAL TAKEAWAY

Good observability is measured not by the quantity of data collected but by the quality of the decision-making support that data provides. Roulette Technologies took a disciplined, automated, and cost-conscious approach to ensure it pays only for the insights it needs.

By treating observability as a finite resource, the company maintains a high-density signal for operational stability while protecting capital. This strategy provides clear visibility into system health while avoiding unnecessary cost or operational overhead.